Assistive Agent Optimization: How AI Decides What to Recommend

Your Content Ranks on Page One. AI Does Not Know It Exists.

You published a well-researched article last week. It ranks in the top five on Google. Your analytics show steady organic traffic. And yet, when someone asks ChatGPT, Perplexity, or Google’s AI Overview about the exact topic you covered, your content is nowhere in the answer.

This is not a ranking failure. It is a pipeline failure. The systems that determine what AI agents recommend operate on different rules than the systems that determine what Google ranks. A page can be a top organic result and still be invisible to every AI assistant on the market.

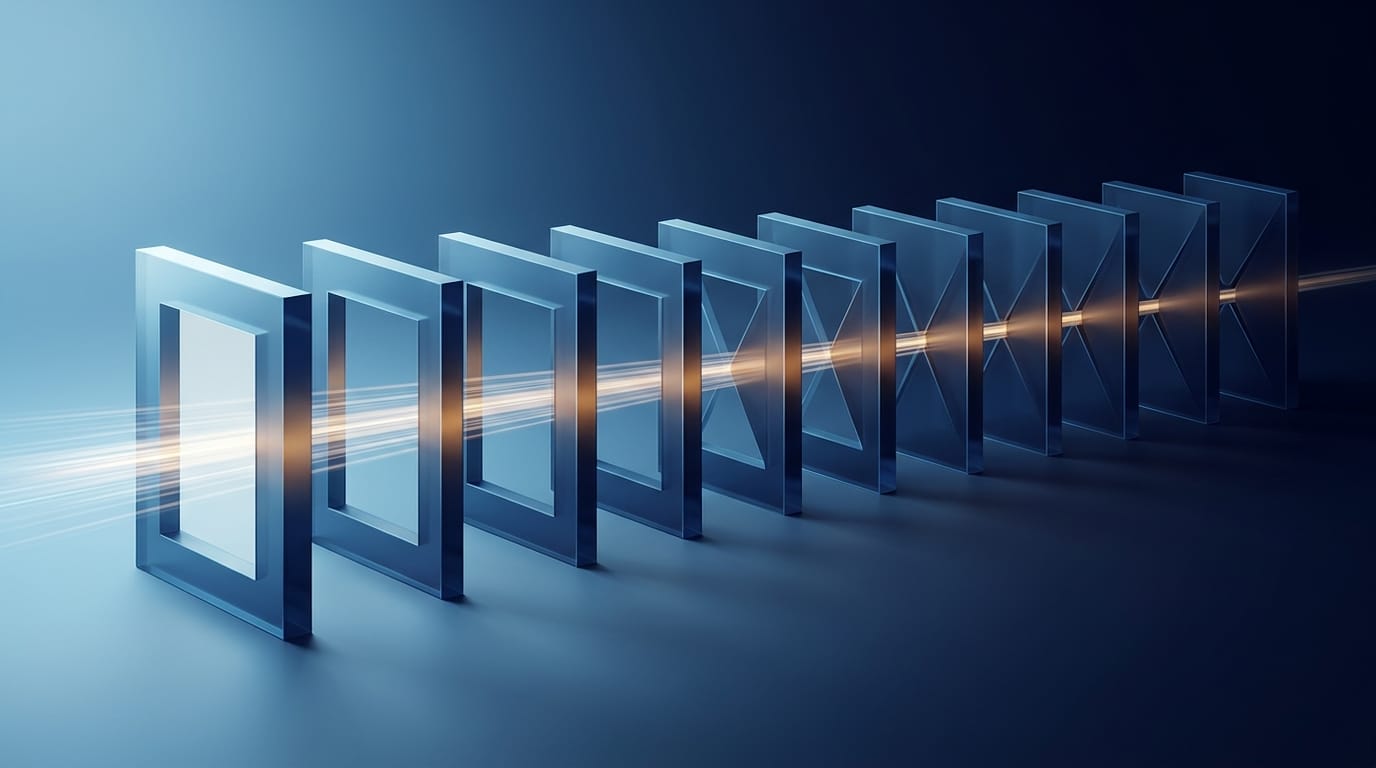

The framework that explains why is called Assistive Agent Optimization, or AAO. It maps 10 sequential gates your content must pass before an AI agent will recommend it. Fail at any single gate, and everything downstream stops.

From Rankings to Recommendations

For 25 years, SEO taught us to optimize for search engines. That worked because the user saw 10 results and chose. The search engine ranked pages. The human decided.

AI agents work differently. When someone asks an AI assistant for a recommendation, the assistant delivers one answer. It has already decided. The human sees the output of a decision that was made for them.

This shift from ranking pages to recommending solutions is what separates traditional search optimization from Assistive Agent Optimization. Jason Barnard’s DSCRI-ARGDW pipeline maps the exact mechanics of how that decision happens, gate by gate, across a 10-stage processing chain.

The 10-Gate Pipeline

Every piece of content passes through 10 gates before an AI agent recommends it. Each gate is binary: your content either passes or stalls. Content that stalls at gate 3 never reaches gate 4, no matter how strongly it would have performed at gates 5 through 10.

The pipeline divides into three acts. Each act has a different audience you are marketing to: the bot, the algorithm, and the person. These audiences are nested. You can only reach the algorithm through the bot, and you can only reach the person through the algorithm.

Act I: Retrieval — Can the Machine Find and Read Your Content?

The first four gates serve the bot. No quality judgments happen here. The only question is whether the system can physically access and process what you published.

Gate 1: Discovery. The system learns your content exists. Sitemaps, internal links, and active signals like IndexNow tell crawlers where to look. Content that nothing points to may never enter the pipeline.

Gate 2: Selection. Finding your content is not the same as deciding to fetch it. Bots allocate limited processing budgets based on signals like entity authority and publishing consistency. A domain with no track record faces a cold-start problem here.

Gate 3: Crawling. The bot arrives and requests your page. Your server needs to respond quickly with clean content. Broken links, redirect chains, and slow server response times end the pipeline at this gate.

Gate 4: Rendering. The bot converts what it fetched into its internal format. This is the gate where most websites silently fail. I explain why below.
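As an illustration of the Discovery signals named at Gate 1, here is a minimal sketch that writes a sitemap in the standard sitemaps.org format. The `build_sitemap` helper, the example.com URLs, and the dates are hypothetical placeholders, not part of the framework itself.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML listing each (url, last_modified) pair."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages; replace with your own URLs and dates.
pages = [
    ("https://www.example.com/", "2025-01-15"),
    ("https://www.example.com/articles/ai-visibility", "2025-01-20"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

A sitemap only tells crawlers where to look. Whether they fetch the page is decided at Gate 2.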

Act II: Storage — Does the Machine Understand What It Collected?

Gates 5 through 7 serve the algorithm. Your content has been collected. Now the system needs to understand it, classify it, and decide whether it is worth using.

Gate 5: Indexing. The system commits your content to memory. Canonical resolution, duplicate handling, and clean structure determine whether your content earns a stable place in the index. Indexing is storage: the system saves what it rendered.

Gate 6: Annotation. The indexed content is classified across 24 or more dimensions, including topic associations, entity relationships, claim structures, and credibility markers. Structured data in JSON-LD format directly improves classification accuracy. Without proper annotation, your content is a black box the algorithm cannot match to queries.

Gate 7: Recruitment. The first explicitly competitive gate. A component of the algorithm decides: is this content worth using over the alternatives? Multiple sources can be recruited for different aspects of a topic, but the algorithm recruits one source over another for a given aspect. Being indexed is not enough. Being chosen over the competition is the threshold.
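The JSON-LD structured data mentioned at Gate 6 can be sketched as follows. This is an illustrative example only: the `article_jsonld` helper and every value passed to it are hypothetical, and the properties shown are a small subset of the schema.org Article type.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a JSON-LD <script> tag describing an article (schema.org Article)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical values; substitute your own page details.
tag = article_jsonld(
    headline="How AI Decides What to Recommend",
    author_name="Jane Doe",
    date_published="2025-01-20",
    url="https://www.example.com/articles/ai-visibility",
)
print(tag)
```

The resulting tag belongs in the HTML the server sends, so that it is present without JavaScript execution.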

Act III: Execution — Will the Person Act on What AI Recommends?

Gates 8 through 10 serve the person, through the algorithm. This is where machine confidence meets a human and resolves into a binary outcome.

Gate 8: Grounding. The AI cross-checks your claims against external sources. When an AI assistant generates a response, it verifies its confidence by querying multiple sources from multiple angles. Content with higher entity and annotation confidence is more likely to be retrieved and trusted during grounding. This is where third-party corroboration across authoritative sources makes or breaks your inclusion in the answer.

Gate 9: Display. The pipeline pivots. For the first time, there is a person. The algorithm presents a finite set of recommendations, often just one. The person can only see what the algorithm chose to show. How the AI frames and quotes your content matters here: writing structure and citation quality determine whether you are quoted directly or paraphrased without attribution.

Gate 10: Won. Binary outcome. One brand wins. Every competitor loses. The person either acts on the recommendation or they do not. Won is not conversion rate optimization in the traditional sense. It is the zero-sum resolution of the entire pipeline.

The Math That Makes This Unforgiving

Here is where intuition fails.

If each gate passes at 90 percent confidence, the end-to-end survival rate is not 90 percent. It is 34.9 percent.

The formula is multiplicative. Confidence at each gate does not add; it multiplies. The general form for end-to-end survival across $n$ gates is:

$$P_{\text{survival}} = \prod_{i=1}^{n} p_i$$

where $p_i$ is the pass rate at gate $i$. When every gate shares a uniform pass rate $p$, this simplifies to:

$$P_{\text{survival}} = p^{n}$$

At 10 gates with 90 percent confidence per gate:

$$P_{\text{survival}} = 0.9^{10} \approx 0.349$$

Only 34.9 percent of content survives.

| Pass Rate Per Gate | End-to-End Survival (10 gates) |
|---|---|
| 99% | 90.4% |
| 95% | 59.9% |
| 90% | 34.9% |
| 80% | 10.7% |
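The arithmetic behind these figures is easy to check. The sketch below assumes only the multiplicative model described above; the function name `end_to_end_survival` is illustrative.

```python
from math import prod


def end_to_end_survival(pass_rates):
    """Multiply the per-gate pass rates to get end-to-end survival."""
    return prod(pass_rates)


GATES = 10
for p in (0.99, 0.95, 0.90, 0.80):
    total = end_to_end_survival([p] * GATES)
    print(f"{p:.0%} per gate -> {total:.1%} end to end")

# 99% per gate -> 90.4% end to end
# 95% per gate -> 59.9% end to end
# 90% per gate -> 34.9% end to end
# 80% per gate -> 10.7% end to end
```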

Jason Barnard calls this Won-Probability arithmetic. The practical consequence is counterintuitive. Consider a brand with 100 percent at 8 gates but 50 percent at 2 gates:

$$1.0^{8} \times 0.5^{2} = 0.25$$

That brand survives only 25 percent of the time.

Compare that to a brand with a consistent 99 percent across all 10 gates, which achieves 90.4 percent. The cascade rewards consistency across the full pipeline over excellence at isolated stages.

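The bottleneck principle. Improving a weak gate has disproportionate impact. Because survival is a product, raising a single gate from $p_{\text{old}}$ to $p_{\text{new}}$ multiplies overall survival by $p_{\text{new}} / p_{\text{old}}$, whatever the other gates score. For example, if your weakest gate sits at 50 percent and you raise it to 75 percent, your overall survival increases by a factor of 1.5. Raising a strong gate from 85 percent to 95 percent gains only a factor of about 1.12. Your weakest gate is always the highest-leverage fix.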
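A short sketch makes the comparison concrete. It assumes the same multiplicative model; the `leverage` helper and the sample pass rates are illustrative choices, not measured data.

```python
from math import prod


def survival(pass_rates):
    """End-to-end survival is the product of the per-gate pass rates."""
    return prod(pass_rates)


def leverage(old_rate, new_rate):
    """Factor by which overall survival changes when one gate improves."""
    return new_rate / old_rate


# Excellence at 8 gates cannot offset 2 weak gates.
uneven = [1.0] * 8 + [0.5] * 2
steady = [0.99] * 10
print(f"uneven brand: {survival(uneven):.1%}")
print(f"steady brand: {survival(steady):.1%}")

# Hypothetical improvements: one weak gate, one strong gate.
print(f"50% -> 75% multiplies survival by {leverage(0.50, 0.75):.2f}")
print(f"85% -> 95% multiplies survival by {leverage(0.85, 0.95):.2f}")
```

The leverage factor does not depend on the other gates, which is why the same absolute improvement is worth more at a weak gate than at a strong one.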

The Rendering Gate: Where Most Websites Silently Fail

Gate 4 deserves its own section because it is the single biggest undiagnosed failure point in AI visibility.

The problem: most AI bots do not execute JavaScript.

When you visit a modern website built with React, Angular, Vue, or any similar framework, your browser downloads a small HTML file and then runs code to build the actual page content. Everything you see appeared because your browser executed that code.

Google accommodates this. Googlebot has a rendering engine that executes JavaScript, waits for the page to load, and then indexes the result. This is why JavaScript-heavy sites can still rank well in Google.

ChatGPT’s crawler, Perplexity’s crawler, and most other AI bots do not do this. They fetch the HTML and read what is there. If the content requires JavaScript to appear, these bots see an empty page.

This means a website built as a single-page application with client-side rendering can simultaneously: rank on page one of Google, receive strong organic traffic, and be functionally invisible to AI assistants.

Barnard introduces the concept of Conversion Fidelity at this gate: how cleanly your content survives the bot’s transformation into its internal format. Bing’s Principal Programme Manager Fabrice Canel confirmed that “conversion fidelity is real” and that “the internal index is not the HTML.” Rendering quality at gate 4 directly affects what the algorithm sees at every downstream gate.

The fix is server-side rendering or static site generation, where the server sends complete HTML without requiring JavaScript execution. This is an infrastructure decision, but the business impact is binary: either AI agents can read your content or they cannot.
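The difference between the two approaches can be approximated in a few lines. This is a rough sketch, assuming a bot that reads only the delivered HTML and ignores scripts. Real crawlers differ, and the two sample pages and the `survives_without_js` helper are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that exists in the HTML without running any scripts."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside <script> and <style>.
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def text_without_js(html):
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def survives_without_js(html, key_phrase):
    """True if the phrase is readable in the raw HTML, before any JavaScript runs."""
    return key_phrase.lower() in text_without_js(html).lower()


# Client-side rendered page: the content exists only inside a script.
csr_html = (
    '<html><body><div id="root"></div>'
    '<script>document.getElementById("root").textContent = "Pricing plans";</script>'
    "</body></html>"
)
# Server-rendered page: the content is already in the HTML.
ssr_html = "<html><body><main><h1>Pricing plans</h1><p>Three tiers.</p></main></body></html>"

print(survives_without_js(csr_html, "Pricing plans"))  # False
print(survives_without_js(ssr_html, "Pricing plans"))  # True
```

To try this on a live page, fetch the raw HTML with any HTTP client and pass the response body to `survives_without_js` along with a phrase that should appear on the page.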

The Circle: Why Won Feeds Back to Discovery

The pipeline appears to be a straight line from gate 1 to gate 10. It is not. It is a circle.

When a person acts on an AI recommendation (Won), that signal feeds back into entity confidence. Higher entity confidence makes the bot more likely to prioritize your next piece of content at Discovery. The algorithm processes it with higher trust. The next recommendation comes with more confidence.

Every successful conversion strengthens the next one. Every failure weakens it. This means the first content you push through all 10 gates creates a compounding advantage. Organizations that build this flywheel early pull away from competitors at an accelerating rate.

It also means bottom-of-funnel work must come first. If your conversion experience is broken, every successful recommendation generates a negative feedback signal. You spend Cascading Confidence faster than you earn it. Fix the conversion before investing in visibility.

What This Means for Your Business

The DSCRI-ARGDW pipeline is not academic theory. It is the mechanical reality of how AI-driven recommendations work. Three practical actions follow from it.

Audit your rendering gate first. If your website uses a JavaScript framework with client-side rendering, you may have a binary problem that no amount of content quality, schema markup, or keyword optimization can overcome. Disable JavaScript in your browser and check whether your pages display their content. If they do not, this is your highest-priority fix.

Fix your weakest gate, not your strongest. The multiplicative model means that improving a gate from 50 percent to 75 percent delivers more pipeline impact than improving one from 85 percent to 95 percent. Run the sequential gating diagnostic: start at gate 1 and work forward until you find the first gate that fails. That is where your investment belongs.
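The sequential gating diagnostic can be sketched as a simple walk through the gates. The gate names come from the pipeline above. The pass rates, the 0.9 threshold, and the function names are hypothetical and would have to come from your own audit.

```python
GATES = [
    "Discovery", "Selection", "Crawling", "Rendering", "Indexing",
    "Annotation", "Recruitment", "Grounding", "Display", "Won",
]


def first_failing_gate(pass_rates, threshold=0.9):
    """Walk the gates in order and return the first one below the threshold."""
    for number, (name, rate) in enumerate(zip(GATES, pass_rates), start=1):
        if rate < threshold:
            return number, name, rate
    return None  # every gate meets the threshold


def weakest_gate(pass_rates):
    """Return the gate with the lowest pass rate, wherever it sits."""
    rate, number = min((r, i) for i, r in enumerate(pass_rates, start=1))
    return number, GATES[number - 1], rate


# Hypothetical audit scores for gates 1 through 10.
scores = [0.99, 0.95, 0.97, 0.40, 0.92, 0.60, 0.80, 0.85, 0.90, 0.90]

print(first_failing_gate(scores))
print(weakest_gate(scores))
```

In this made-up audit both checks point at gate 4, Rendering. When they disagree, the earlier gate still comes first, because content that stalls there never reaches the later one.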

Build the flywheel early. The feedback loop from Won to Discovery means the first brand to push content through all 10 gates earns a compounding advantage. Every successful recommendation makes the next one more likely. Every month you wait is a month your competitors are building entity confidence that you will have to overcome.

Frequently Asked Questions

Does Assistive Agent Optimization replace SEO?

No. AAO contains SEO. Every SEO skill you have built still applies. The DSCRI-ARGDW pipeline includes traditional search indexing (gates 1 through 5) as a subset of the full 10-gate process. A Semrush study found that 96 percent of AI Overview citations come from sources with strong E-E-A-T signals, which are the same signals traditional SEO has always targeted. You need both.

My site ranks well on Google. Why would AI agents not see it?

The most common cause is the rendering gate. Google’s crawler executes JavaScript and renders your page. Most AI crawlers do not. If your site uses a JavaScript framework that renders content on the client side, Google sees the full page while AI bots see an empty shell. The site ranks because Google can process it. AI agents ignore it because they cannot.

How do I know which gate is my weakest?

Start from gate 1 and work forward. Infrastructure gates (1 through 4) are binary and testable: is your content in sitemaps? Does it render without JavaScript? Does the server respond quickly? Storage gates (5 through 7) require checking whether your content is indexed, properly classified with structured data, and being considered for relevant queries. Competitive gates (8 through 10) require analyzing whether AI platforms are citing your content when answering relevant questions.

Does this apply to small businesses or only large enterprises?

The pipeline does not care about company size. It cares about gate pass rates. A small business with clean rendering, strong structured data, and consistent entity signals across the web can outperform a large enterprise whose JavaScript-heavy site fails at gate 4. The infrastructure gates are the great equalizer.

How quickly should I expect results from fixing pipeline gates?

Infrastructure fixes (rendering, sitemaps, server speed) can show impact within weeks as AI crawlers reprocess your content. Storage and competitive improvements (structured data, entity authority, content quality) take longer because they depend on the AI systems refreshing their understanding of your domain. The compounding flywheel means early improvements create accelerating returns over time.

Find Out Where Your Pipeline Is Failing

Most organizations do not know which gate is killing their AI visibility. They invest in content quality while their rendering gate is at zero. They build backlinks while their structured data is missing. They optimize for keywords while their entity signals are absent from two of the three graphs.

I built andres.plashal.com on an SSG architecture that scores above 95 percent at every infrastructure gate. I have implemented the full DSCRI-ARGDW diagnostic across client sites in financial services, SaaS, and professional services. The pattern is consistent: one or two gates account for nearly all the confidence loss, and fixing them produces outsized returns before any content investment begins.

Here is what I offer:

Pipeline diagnostic. A gate-by-gate audit of your site against all 10 stages. You get a scorecard showing exactly where confidence is leaking, which gate is your bottleneck, and what the fix costs. The rendering gate check alone takes 15 minutes and tells you whether your entire content pipeline has a structural problem that no amount of content quality can overcome.

Infrastructure remediation. If your site fails at gates 1 through 4, I fix the technical foundation: server-side rendering, structured data implementation, sitemap architecture, and IndexNow integration. This is the highest-ROI work in the pipeline because it converts binary failures into passing gates.

Competitive positioning. For organizations that pass infrastructure but stall at the competitive gates, I build the entity authority, content architecture, and cross-graph corroboration strategy that earns AI recommendations over your competitors.

Stop publishing content that AI cannot see.

Get a pipeline diagnostic that shows exactly which gates are failing and what to fix first. The rendering gate check is complimentary.