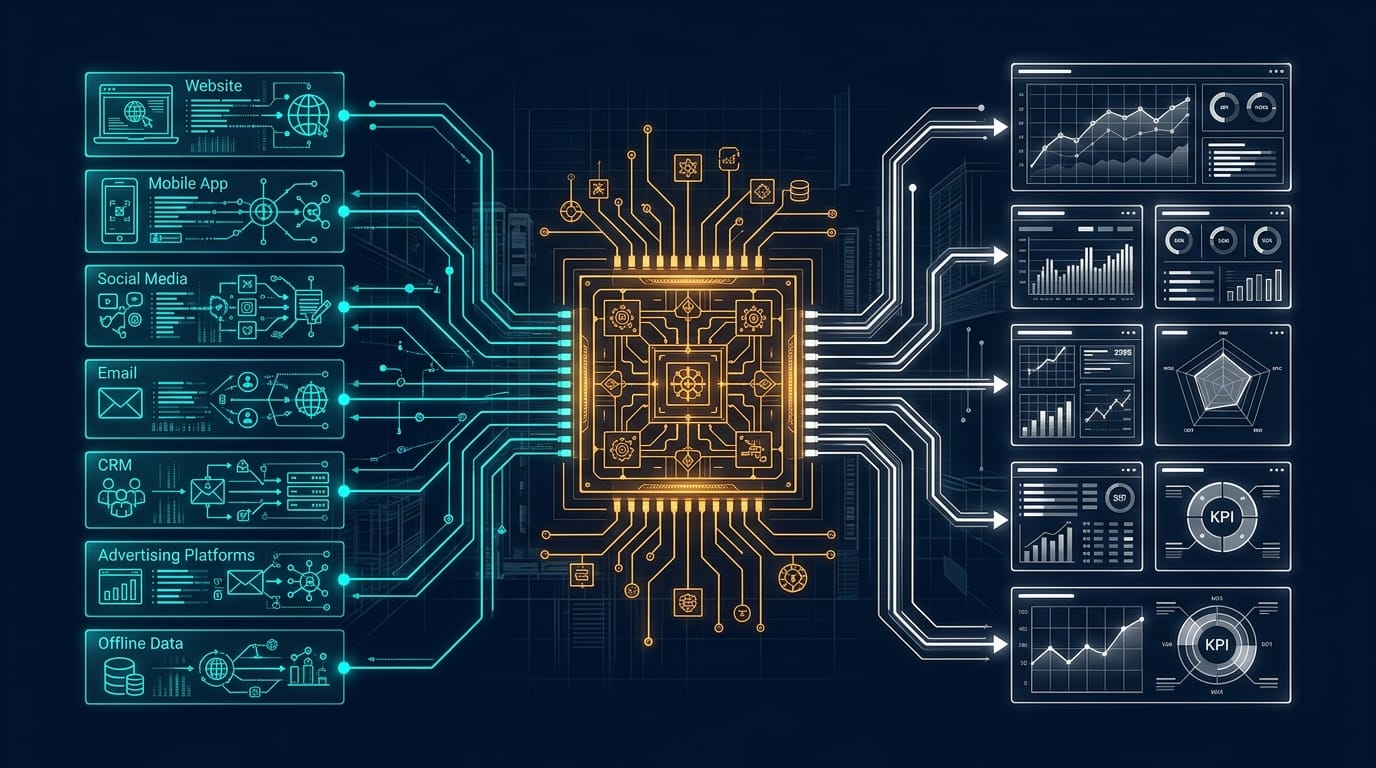

Cross-Channel Analytics Architecture

Cross-channel analytics architecture is the structural design that connects every marketing data source into a single, queryable system of record. Without it, your marketing team operates on fragmented truths, where each platform tells a different story about the same customer.

I have spent two decades building marketing measurement systems, and the pattern is always the same. Organizations collect data from a dozen platforms, export CSVs into spreadsheets, and then argue in meetings about whose numbers are right. The problem is never the data itself. The problem is the architecture that fails to unify it.

This guide walks through the engineering required to build a unified analytics layer connecting GA4, your CRM, and BigQuery into a single source of truth that your entire organization can trust.

Why Siloed Analytics Tools Create Measurement Gaps

Every marketing platform reports on its own terms. GA4 uses event-based sessions. Your CRM tracks lifecycle stages. Meta counts view-through conversions that Google ignores entirely. When you pull numbers from each tool independently, you are assembling a puzzle where every piece comes from a different box.

The financial impact of this fragmentation is staggering. According to IDC research, data silos cost the global economy $3.1 trillion annually. At the organizational level, Gartner reports that bad data costs companies an average of $12.9 million per year. These are not abstract losses. They show up as misallocated budgets, missed opportunities, and campaigns that get credit they do not deserve.

The measurement gaps created by siloed tools fall into three categories.

Attribution conflicts. GA4 credits the last non-direct click. Your CRM credits the first touch that created the contact. Your paid media team reports platform-attributed conversions that double-count the same sale. Without a unified layer, your team cannot answer the fundamental question: what actually drove this revenue?

Audience fragmentation. A prospect visits your site (GA4 event), downloads a whitepaper (CRM form submission), and later converts through a paid search ad (Google Ads). Each system knows part of the story. None of them know the whole journey. MuleSoft’s 2025 Connectivity Benchmark found that organizations average 897 applications but only 29% are integrated, creating massive blind spots in customer understanding.

Reporting latency. When analysts spend hours manually reconciling data across platforms, decisions slow down. Forrester research found that knowledge workers spend an average of 12 hours per week chasing data across disconnected systems. At a $75,000 annual salary, that is over $1,500 per month in lost productivity for a single employee.

The solution is not another dashboard that aggregates screenshots from five tools. The solution is an architecture that centralizes raw data, transforms it into consistent schemas, and serves it through a single query interface.

Designing a GA4 + CRM + BigQuery Unified Layer

The unified analytics layer follows a hub-and-spoke model. BigQuery serves as the central warehouse. GA4 and your CRM feed raw event and contact data into it. Transformation logic standardizes everything into shared dimensions and metrics. Visualization tools query the warehouse directly.

Here is the architectural blueprint.

Layer 1: Data Ingestion

GA4 offers a native BigQuery export that streams event data directly into your warehouse. This is the foundation of your architecture and one of the strongest arguments for choosing BigQuery as your marketing data warehouse. Over 14.2 million websites now use GA4, and the free BigQuery export means every one of them can access raw, unsampled event data without paying for GA360.

For your CRM, you need a connector tool. Fivetran, Airbyte, and Stitch all offer pre-built connectors for major CRM platforms that replicate contacts, deals, engagements, and marketing events into BigQuery on a scheduled cadence. The key requirement is incremental sync capability, so you are loading only changed records rather than full table replacements on every run.

Additional spokes can include your ad platforms (Google Ads, Meta Ads, LinkedIn Ads), email service providers, and any other system that holds marketing-relevant data. Each spoke connects to the BigQuery hub through its own ingestion pipeline.

Layer 2: Raw Data Storage

Raw data lands in a dedicated BigQuery dataset, often named raw or staging. This dataset mirrors the source schemas exactly. You do not transform data at this stage. The purpose is to preserve a complete, auditable record of everything each source system sent.

This separation matters for debugging. When a transformation produces unexpected results, you can always trace back to the raw data and verify whether the issue originated at the source or in your logic.

Layer 3: Transformation and Modeling

This is where the architecture delivers its value. Raw GA4 events, CRM contacts, and ad platform spend data get transformed into a unified schema with consistent naming conventions, shared dimension keys, and standardized metrics.

The industry-standard tool for this layer is dbt (data build tool). dbt lets you write SQL-based transformations that are version-controlled, tested, and documented. Your transformation models typically follow this hierarchy:

- Staging models clean and rename raw columns into consistent formats

- Intermediate models join data across sources and calculate derived metrics

- Mart models produce the final tables that dashboards and analysts query

A practical example: your staging layer normalizes GA4’s event_timestamp and your CRM’s native timestamp into a shared event_at column using UTC. Your intermediate layer joins GA4 sessions with CRM contacts using a shared user identifier (typically the client ID stored in a hidden form field at the point of conversion). Your mart layer produces a fct_conversions table that attributes revenue across touchpoints using your chosen model.

Layer 4: Serving and Visualization

The final layer connects your warehouse to the tools your team actually uses. Looker Studio (free), Looker, Tableau, or Power BI can all query BigQuery directly. The critical principle here is that dashboards should query the mart layer only. Analysts who need deeper exploration access the intermediate or staging layers through SQL.

This architecture ensures that every person in the organization, from the CMO reviewing the monthly board deck to the paid media specialist optimizing daily bids, is working from the same numbers.

ETL vs. ELT Pipeline Patterns for Marketing Data

The pipeline that moves data from source systems into your warehouse follows one of two patterns: ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform). For marketing data warehouses built on BigQuery, Google Cloud recommends ELT as the primary pattern.

Why ELT Wins for Marketing Data

ELT loads raw data into the warehouse first, then transforms it using the warehouse’s own compute power. This approach has three advantages for marketing teams.

Speed to insight. Data arrives in the warehouse within minutes of extraction. Transformations run on schedule or on-demand. When a campaign launches and you need same-day performance data, ELT delivers without waiting for an external transformation server to process the batch.

Flexibility. Marketing questions change constantly. With ELT, your raw data is always available for new transformations without re-extracting from source systems. When the VP of Marketing asks a question nobody anticipated, your analyst can write a new dbt model against existing raw data rather than requesting a new data feed.

Cost efficiency. BigQuery’s serverless pricing model means you pay for queries, not idle compute. Running transformations inside BigQuery eliminates the need for a separate transformation server, reducing infrastructure complexity and cost.

When ETL Still Makes Sense

ETL transforms data before loading it into the warehouse. This pattern remains useful when you need to filter out sensitive data (PII scrubbing before it enters the warehouse), reduce data volume (aggregating high-frequency event streams), or standardize formats from legacy systems with unreliable schemas.

Most mature marketing data architectures use a hybrid approach. ETL handles compliance-sensitive pipelines where PII must be removed before warehouse entry. ELT handles everything else.

Pipeline Orchestration

Your pipelines need a scheduler. For simple architectures, dbt Cloud’s built-in scheduler handles transformation orchestration. For complex multi-source architectures, Apache Airflow or Prefect provide the DAG-based (directed acyclic graph) orchestration needed to coordinate extraction, loading, and transformation across dozens of data sources with dependency management.

The orchestration layer should include alerting for pipeline failures. When a CRM sync fails at 3 AM, your team needs to know before the morning standup, not after the CMO asks why the dashboard looks wrong.

Data Warehouse Schema Design for Marketing Teams

Schema design determines whether your warehouse becomes a strategic asset or an expensive data swamp. Marketing data warehouses follow a dimensional modeling approach with fact and dimension tables organized into a star schema.

Core Fact Tables

fct_sessions. One row per website session, sourced from GA4 events. Includes session start time, landing page, traffic source, device category, and session-level engagement metrics (pages viewed, events triggered, session duration). This table powers acquisition reporting.

fct_conversions. One row per conversion event, joining GA4 conversion events with CRM deal creation. Includes conversion timestamp, conversion type (lead, MQL, SQL, closed-won), attributed revenue, and the traffic source that receives credit. This table powers ROI reporting.

fct_spend. One row per day per campaign per platform, sourced from ad platform APIs. Includes impressions, clicks, cost, and platform-reported conversions. This table powers efficiency reporting and joins with fct_conversions for true ROAS calculations.

fct_engagements. One row per marketing interaction, sourced from your CRM. Includes email opens, email clicks, form submissions, meeting bookings, and content downloads. This table powers nurture and lifecycle reporting.

Core Dimension Tables

dim_contacts. One row per known contact, sourced from your CRM. Includes lifecycle stage, lead source, company, industry, and any custom properties relevant to your business.

dim_campaigns. One row per campaign, combining data from GA4 UTM parameters and ad platform campaign metadata. Includes campaign name, channel, objective, start date, end date, and budget.

dim_content. One row per content asset (blog post, landing page, downloadable resource). Includes title, author, publish date, content type, and topic cluster.

dim_date. A standard date dimension table with fiscal periods, week numbers, and quarter boundaries aligned to your organization’s reporting calendar.

The Stitching Problem

The hardest engineering challenge in marketing data warehouses is identity resolution. GA4 tracks anonymous client IDs. Your CRM tracks known email addresses. The bridge between them is the moment a visitor identifies themselves, typically through a form submission.

Your architecture needs an identity graph that maps GA4 client IDs to CRM contact IDs. The simplest implementation captures the GA4 client ID in a hidden form field at the point of conversion. More sophisticated implementations use a Customer Data Platform (CDP) to maintain a persistent identity graph across all touchpoints.

Without identity stitching, your warehouse contains two disconnected datasets: anonymous behavior and known contacts. With it, you can trace the complete journey from first anonymous visit through closed revenue, which is the entire point of building this architecture.

Building Dashboards That Reflect Reality

A unified warehouse is useless if the dashboards built on top of it perpetuate the same confusion that siloed tools created. Dashboard design is an information architecture problem, not a visualization problem.

The Dashboard Hierarchy

Effective marketing analytics follows a three-tier dashboard hierarchy.

Executive dashboards answer one question: is marketing driving business results? These dashboards show pipeline generated, revenue attributed, CAC by channel, and trend lines. Refresh cadence: weekly. Audience: C-suite and board.

Manager dashboards answer: where should we invest more or less? These show channel-level performance, campaign comparisons, funnel conversion rates, and budget pacing. Refresh cadence: daily. Audience: marketing directors and channel leads.

Analyst dashboards answer: why is this happening? These show granular event data, cohort analysis, A/B test results, and anomaly detection. Refresh cadence: real-time or hourly. Audience: analysts and data scientists.

Each tier queries the same warehouse, ensuring numbers roll up consistently. The executive dashboard showing $500K in pipeline this quarter should reconcile exactly with the sum of all campaign-level pipeline shown on the manager dashboard.

Metric Definitions as Code

The most common source of dashboard confusion is inconsistent metric definitions. One dashboard counts a “lead” as any form submission. Another counts only marketing-qualified leads. A third counts CRM contacts created this month regardless of source.

Solve this by defining metrics in your dbt models, not in your visualization tool. When the definition of “MQL” lives in a single dbt model that every dashboard queries, you eliminate definitional drift. When the definition changes, you update it once, and every dashboard reflects the new logic automatically.

This approach, sometimes called the metrics layer, is the difference between a warehouse that creates clarity and one that creates new arguments.

Connecting Analytics to Optimization

Dashboards should not just report what happened. They should connect directly to the optimization workflows described in the multi-channel optimization playbook. When a dashboard shows that paid social CPL increased 40% month-over-month, the next click should take the analyst to the campaign-level breakdown with enough granularity to diagnose the cause and take action.

This connection between measurement and action is what separates analytics architecture from analytics tooling. Tools show you numbers. Architecture shows you what to do about them.

Implementation Roadmap

Building a unified analytics layer is a phased project, not a weekend hackathon. Here is the sequence I recommend based on implementations across multiple organizations.

Phase 1: GA4 to BigQuery (Week 1-2). Enable the native GA4 BigQuery export. Build staging models in dbt to clean and standardize the event data. Create a basic session and conversion fact table. Stand up a Looker Studio dashboard that replaces GA4’s native reporting interface.

Phase 2: CRM Integration (Week 3-4). Deploy a CRM-to-BigQuery connector (Fivetran or Airbyte). Build staging models for contacts, deals, and engagements. Implement identity stitching between GA4 client IDs and CRM contacts. Create the fct_conversions table that joins web behavior with CRM outcomes.

Phase 3: Ad Platform Integration (Week 5-6). Connect Google Ads, Meta Ads, and any other paid platforms. Build the fct_spend table. Create blended ROAS calculations that compare warehouse-attributed conversions against platform-reported conversions. This phase often reveals significant discrepancies, which is exactly the point.

Phase 4: Dashboard Rollout (Week 7-8). Build the three-tier dashboard hierarchy. Train stakeholders on the new reporting system. Deprecate legacy reports that pull from individual platform UIs. Establish a cadence for metric definition reviews.

Phase 5: Advanced Analytics (Ongoing). Layer in attribution modeling, marketing mix modeling, predictive LTV scoring, and anomaly detection. These capabilities become possible only after the foundational architecture is in place.

The total investment for a mid-market organization is typically 8-12 weeks of data engineering effort plus ongoing maintenance of approximately 10 hours per week. The return is a measurement system that replaces guesswork with precision and replaces platform-level vanity metrics with business outcomes.

Frequently Asked Questions

How much does a BigQuery-based marketing data warehouse cost to operate?

BigQuery pricing is based on data storage and query volume. For a typical mid-market marketing data warehouse processing 50-100 GB of data per month, expect $200-500 per month in BigQuery costs. The larger expense is the connector tools (Fivetran, Airbyte) which range from $500-2,000 per month depending on data volume and number of connectors. Total infrastructure cost for most organizations falls between $1,000 and $3,000 per month, a fraction of what a single misallocated campaign budget costs.

Can we build this without a dedicated data engineer?

You can build Phases 1 and 2 with a technically skilled marketing analyst who knows SQL. dbt’s documentation and community resources make the learning curve manageable. However, Phases 3-5 and ongoing maintenance benefit significantly from dedicated data engineering support, either in-house or through a specialized partner. The architecture is simple enough to start small and complex enough to warrant professional support as it scales.

How do we handle data privacy and GDPR compliance in a unified warehouse?

Design privacy controls into the architecture from day one. Use BigQuery’s column-level security to restrict PII access. Implement a consent management layer that flags records based on consent status, and build your transformation models to respect those flags. ETL pipelines that handle EU data should hash or pseudonymize personal identifiers before loading them into the warehouse. Your legal team should review the data flows before you go live.

What if our team already uses Snowflake or Redshift instead of BigQuery?

The architectural principles are identical regardless of warehouse platform. The GA4 native export is BigQuery-specific, but tools like Fivetran and Airbyte can replicate GA4 data into any warehouse. Snowflake and Redshift both support dbt, star schemas, and the same dashboard tools. Choose the warehouse that aligns with your existing infrastructure and team expertise.

How long before we see ROI from this investment?

Most organizations see measurable impact within the first quarter. The immediate wins come from eliminating manual reporting reconciliation (saving analyst hours), identifying budget misallocation (reallocating spend to higher-performing channels), and reducing time-to-insight for campaign decisions. Retailers using unified data systems report 15-25% better ad returns and 30% lower customer acquisition costs, and those gains compound as the architecture matures.

Build Your Unified Analytics Layer

A cross-channel analytics architecture is not a luxury for enterprise organizations with dedicated data teams. It is the minimum viable infrastructure for any marketing operation that wants to make decisions based on reality rather than each platform’s self-serving version of the truth.

The technology is accessible. BigQuery is free for small-to-mid workloads. GA4’s native export eliminates the hardest integration. dbt’s open-source framework handles transformation. The barrier is not technology. It is the decision to stop tolerating fragmented measurement.

If your team is ready to build a unified analytics layer, or if you need help designing the architecture for your specific stack, get in touch. I work with marketing teams to design, build, and operationalize the data infrastructure that turns raw platform data into strategic decisions.

About the Author

Andrés Plashal

Senior Marketing Executive and Strategic Revenue & Search Marketing Engineer. $150M+ attributed revenue across 30+ companies. Google Partner since 2017.

Credentials: UIUC Gies College of Business (Behavioral Science), Columbia College Chicago (Interactive Arts & Media). Member: American Marketing Association, GAABS, Paid Search Association. Published researcher (SCTE/NCTA).